Last updated on August 23, 2021

Data and Analytics Terminology 101: 33 Terms You Should Know

By Sharon Rehana

Data and analytics can be complex to understand, especially with so many terms and buzzwords constantly being thrown around. But it doesn’t have to be. Here are 33 terms commonly used in the data and analytics space, along with their definitions, to help you get started.

It can be intimidating to have a conversation about data and analytics, especially when there are so many different directions the conversation can go. What does big data even mean? What exactly is the difference between predictive and prescriptive analytics, and how does it apply to what you do? Understanding the jargon will take you one step closer to understanding how you can use your data to make more informed decisions within your organization.

Going back to basics, in this blog post we’ll define some commonly used terms in the data and analytics space. This list is by no means exhaustive, but it gives you a good entry point into the world of data and analytics.

Artificial intelligence (AI) is a simulation of human intelligence processes by machines. It combines computer science with robust datasets to enable problem-solving using the rapid learning capabilities of machines.

Augmented intelligence is a design pattern for a human-centered partnership model in which people and artificial intelligence work together to enhance cognitive performance, including learning, decision making, and new experiences. Combining human intuition with artificial intelligence is powerful and can help mitigate the perceived risks of purely machine-driven AI.

Big data refers to large and complex datasets containing structured and unstructured data, arriving in ever-increasing volumes and at ever-increasing velocity. Big data is relative: what was big ten years ago is no longer considered big today, and the same will be true ten years from now. The point is that the data is big enough to require special attention when it comes to storing, moving, updating, querying, and aggregating it.

Business glossary is a repository of information that contains concepts and definitions of business terms frequently used in day-to-day activities within an organization — across all business functions — and is meant to be a single authoritative source for commonly used terms for all business users. Business glossaries are used to build consensus in organizations and are great for getting new team members up to speed on your organization’s jargon and lexicon of acronyms.

Business intelligence (BI) leverages software and services that help business users make more informed decisions by delivering reports and dashboards that help them analyze data and turn it into actionable information.

Cloud computing is a service delivered over the internet through which an organization can access on-demand computing resources from another organization under a shared service model. Cloud computing allows organizations to avoid the large upfront costs and ongoing maintenance of procuring, hosting, and managing their own data centers. Users effectively rent compute, network, and storage resources and pay only for the services they use, for as long as they use them. This provides the flexibility to scale resources up and down quickly and on demand.

Data architecture is the plan and design for an organization’s entire data lifecycle, from the moment data is captured to the point where value is generated from it through analytics.

Data catalog is the pathway, or bridge, between a business glossary and a data dictionary. It is an organized inventory of an organization’s data assets that informs users, both business and technical, about the datasets available on a topic and helps them locate those datasets quickly.

Data democratization is the process of providing all business users within an organization — technical and non-technical — access to data and enabling them to use it, when they need it, to gain insights and expedite decision-making.

Data dictionary is the technical and thorough documentation of data and its metadata within a database or repository. It consists of the names of fields and entities, their location within the database or repository, detailed definitions, examples of content, descriptions for business interpretation, technical information like type, width, constraints, and indexes, and business rules and logic applied to derived or semantic assets.

Data engineering is the set of processes and practices needed to transform raw data into meaningful and actionable information. Common data engineering tasks include data collection, extraction, curation, ingestion, storage, movement, transformation, and integration.

Data ingestion is the process by which data is loaded from various sources to a storage medium — such as a data warehouse or a data lake — where it can be accessed, used, and analyzed.

Data integration is the process of connecting disparate data together for analysis or operational uses.
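As a minimal illustration, the Python sketch below connects two hypothetical sources, a CRM export and a billing export, by joining their records on a shared customer ID. The record layouts and the `integrate` helper are assumptions made for this example, not any particular integration tool’s API.

```python
from typing import Dict, List

# Hypothetical source 1: customer records from a CRM system
crm = [
    {"customer_id": 1, "name": "Acme Corp", "region": "East"},
    {"customer_id": 2, "name": "Globex", "region": "West"},
]

# Hypothetical source 2: invoice totals from a billing system
billing = [
    {"customer_id": 1, "total_billed": 1200.0},
    {"customer_id": 2, "total_billed": 850.0},
    {"customer_id": 3, "total_billed": 400.0},  # no matching CRM record
]


def integrate(left: List[Dict], right: List[Dict], key: str) -> List[Dict]:
    """Left-join two lists of records on a shared key (hypothetical helper)."""
    # Index the right-hand source by key for constant-time lookups
    index = {row[key]: row for row in right}
    combined = []
    for row in left:
        # Rows without a match keep only their left-hand fields
        match = index.get(row[key], {})
        combined.append({**row, **match})
    return combined


for record in integrate(crm, billing, "customer_id"):
    print(record)
```

Because this is a left join on the CRM data, billing-only records such as customer 3 are dropped; whether that is acceptable is a design decision you would make based on how the integrated data will be used.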

Data governance is the way an organization ensures that its data policies, practices, and processes are followed. When executed properly, a governance program should also clearly define who ultimately owns the data, who stewards it when something needs to be corrected or maintained, and who uses it to ensure that downstream impacts of change are monitored. A data governance framework defines how you will implement a data governance program. It creates a single set of rules and processes around data management, and in turn makes it easier to execute your data governance program.

Data lake is a central data repository that accepts structured, semi-structured, and unstructured data types, including relational data, in a low-to-no modeling framework. It is used for tasks such as reporting, visualization, advanced analytics, and machine learning. A data lake can be established on premises (within an organization’s data centers) or in the cloud.

Data management is the way you carry out your data strategy. It encompasses the plans, policies, procedures, and actions applied to an organization’s data assets throughout the data lifecycle to create valuable information repeatably and at scale.

Data mining is the practice of systematically analyzing large datasets to generate insightful information, uncover hidden correlations, and identify patterns.

Data model is a communication and consensus-building tool: a visual representation, created by a person or an organization, of how things relate to each other and how processes behave in the real world. Data models are used to translate business requirements into technical requirements, especially in database and system design.

Data quality is a measure of the condition of data based on factors such as accuracy, completeness, consistency, and reliability. Generally, data is of sufficient quality when it is fit for its intended uses in operations and decision making.
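Accuracy and reliability usually require an external reference to check against, but completeness and consistency can be scored directly from the data itself. The Python sketch below is a minimal illustration, assuming a hypothetical set of customer records and an assumed list of valid state codes; in practice, the rules and acceptable thresholds come from your organization’s own data standards.

```python
from typing import Dict, Iterable, List, Optional, Set

# Hypothetical customer records with some quality problems
records: List[Dict[str, Optional[str]]] = [
    {"id": "1", "email": "a@example.com", "state": "NY"},
    {"id": "2", "email": None, "state": "NY"},            # missing email
    {"id": "3", "email": "c@example.com", "state": "New York"},  # nonstandard code
    {"id": "4", "email": "d@example.com", "state": "CA"},
]

VALID_STATES: Set[str] = {"NY", "CA"}  # assumed reference list of allowed codes


def completeness(rows: List[Dict], field: str) -> float:
    """Share of rows in which the field is populated."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def consistency(rows: List[Dict], field: str, allowed: Iterable[str]) -> float:
    """Share of rows whose value follows the agreed coding standard."""
    allowed = set(allowed)
    conforming = sum(1 for r in rows if r.get(field) in allowed)
    return conforming / len(rows)


print(f"email completeness: {completeness(records, 'email'):.0%}")
print(f"state consistency:  {consistency(records, 'state', VALID_STATES):.0%}")
```

Scores like these only become meaningful when compared against what the intended use requires: 75% completeness might be fine for a marketing list but not for billing.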

Data replication is the process and activities necessary to make a copy of data stored in one location and move it to a different location. This replication activity improves accessibility to data and protects an organization from a single point of failure that can cause a data loss event.
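At its simplest, replication means copying data to a second location and confirming that the copy is faithful. The Python sketch below illustrates this with the standard library only: it copies a hypothetical `sales.csv` file into a second directory and verifies the copy with a SHA-256 checksum. The file names and the `replicate` helper are assumptions for illustration; production replication of databases or cloud storage typically runs continuously and at far larger scale.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 checksum of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def replicate(source: Path, target_dir: Path) -> Path:
    """Copy a file to a second location and verify the copy is identical."""
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    shutil.copy2(source, target)  # copies contents plus file metadata
    if sha256_of(source) != sha256_of(target):
        raise OSError(f"replica of {source} does not match the original")
    return target


with tempfile.TemporaryDirectory() as tmp:
    primary = Path(tmp) / "primary" / "sales.csv"
    primary.parent.mkdir()
    primary.write_text("region,amount\nEast,100\nWest,250\n")

    replica = replicate(primary, Path(tmp) / "replica")
    primary.unlink()  # simulate losing the original location
    print(replica.read_text())  # the data survives in the replica
```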

Data science is the field of applying math and statistics, scientific principles, domain expertise, and advanced analytics techniques (such as machine learning and predictive analytics) to extract meaningful and actionable insights from data and to use them for strategic decision-making.

Data strategy is a defined plan that outlines the people, processes, and technology your organization needs to accomplish its data and analytics goals. A data strategy is designed to answer exactly what you need in order to use data more effectively: what processes are required to ensure the data is high quality and accessible; what technology will enable the storage, sharing, and analysis of data; and what data is required, where it’s sourced from, and whether it’s of good quality.

Data warehouse is a highly governed centralized repository of modeled data sourced from all kinds of different places. Data is stored in the language of the business, providing reliable, consistent, and quality-rich information.

Data visualization is the representation of data and information in the form of charts, diagrams, pictures, or tables. It communicates information in a way that matches how the human brain interprets it, making it possible to identify outliers quickly, accurately, and precisely.

Descriptive analytics tells you what happened in the past by looking at historical data and finding patterns. Most organizations with some level of maturity on their analytics journey are already doing some degree of descriptive analytics.
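A minimal Python sketch of descriptive analytics: summarize hypothetical historical sales by region to answer "what happened?" The data is invented for illustration, and the "change" figure assumes the rows are listed in chronological order.

```python
import statistics
from collections import defaultdict
from typing import Dict, List

# Hypothetical historical sales: (month, region, units sold), in date order
sales = [
    ("2021-01", "East", 120), ("2021-01", "West", 90),
    ("2021-02", "East", 135), ("2021-02", "West", 80),
    ("2021-03", "East", 150), ("2021-03", "West", 70),
]

# Group units sold by region, preserving chronological order
by_region: Dict[str, List[int]] = defaultdict(list)
for _month, region, units in sales:
    by_region[region].append(units)

# Summarize what happened in each region over the period
for region, units in sorted(by_region.items()):
    print(f"{region}: total={sum(units)}, "
          f"mean={statistics.mean(units):.1f}, "
          f"change={units[-1] - units[0]:+d}")
```

Totals, averages, and changes over time like these describe the past; explaining *why* West declined is the job of diagnostic analytics.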

Diagnostic analytics helps organizations understand the “why” behind the “what” of descriptive analytics. That understanding enables better decision making and leads to better predictive use cases.

Embedded analytics places analytics at the point of need, inside a workflow or application, so users can take immediate action without leaving the application to find more information before making a decision. Embedded analytics can also work the opposite way, where organizations put an operational workflow inside an analytics application to streamline processes. For example, an analytics application that identifies product out-of-stock trends could let a planner adjust that product’s reorder threshold directly within the analytics dashboard.

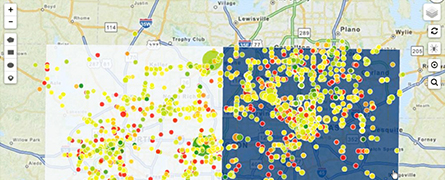

Geospatial analytics gathers, manipulates, and displays geographic information system data and imagery, including GPS and satellite photographs, to create geographic models and data visualizations for more accurate modeling and prediction of trends. Putting data in the context of its geography can lead to insights that are not immediately visible when analyzed with traditional data visualization methods.

Machine learning is a practical application of AI, where a system uses data and information to learn and improve over time by identifying trends, patterns, relationships, and optimizations.

Predictive analytics is a form of advanced analytics that determines what is likely to happen based on historical data using statistical techniques, data mining, or machine learning.
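A minimal sketch of one statistical technique mentioned above: fit a straight-line trend to hypothetical monthly sales using ordinary least squares, then extrapolate one month ahead. The data and the `fit_line` helper are assumptions for illustration; a linear trend is only one of many possible predictive models, and it assumes the past pattern continues.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: month number vs. units sold
months = [1, 2, 3, 4, 5, 6]
units = [100, 110, 118, 131, 140, 149]

slope, intercept = fit_line(months, units)
forecast = slope * 7 + intercept  # predict month 7 from the fitted trend
print(f"trend: {slope:.2f} units/month, forecast for month 7: {forecast:.1f}")
```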

Prescriptive analytics refers to truly guided analytics, where your analytics prescribes or guides you toward a specific action to take. It effectively merges descriptive and predictive analytics to drive decision-making.

Supervised learning uses labeled data (data that comes with a target or identifier such as a name, type, or number) to train models to classify data or make accurate predictions, with the known labels guiding the learning. Of the two types of machine learning covered here, it is the simpler and the more widely used, and it is often the more accurate, because training is guided by known historical targets that the model learns to reproduce.
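To show what learning from labeled data looks like, here is a minimal Python sketch of one of the simplest supervised techniques, a 1-nearest-neighbor classifier. The customer features and the "casual"/"loyal" labels are hypothetical; the point is that the known labels in the training data determine the prediction for a new, unlabeled customer.

```python
import math
from typing import List, Tuple

# Hypothetical labeled training data: (annual spend, visits per month) -> label
training: List[Tuple[Tuple[float, float], str]] = [
    ((200.0, 1.0), "casual"),
    ((250.0, 2.0), "casual"),
    ((1500.0, 8.0), "loyal"),
    ((1800.0, 10.0), "loyal"),
]


def predict(features: Tuple[float, float]) -> str:
    """1-nearest-neighbor: return the label of the closest training example."""
    _, label = min(training, key=lambda item: math.dist(item[0], features))
    return label


print(predict((1600.0, 9.0)))  # → loyal
print(predict((300.0, 2.0)))   # → casual
```

Because spend is on a much larger scale than visits, it dominates the distance in this sketch; real models usually normalize features before training.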

Unsupervised learning uses unlabeled data (data that does not come with a target or identifier). Instead of learning from a defined target variable, its algorithms identify patterns and structure in the dataset on their own. Unsupervised learning can perform more complex tasks than supervised learning, but its results have the potential to be less accurate and may be harder to interpret without additional analysis.
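A minimal Python sketch of one common unsupervised technique, k-means clustering, applied to hypothetical customer data that has no labels. The algorithm finds the groupings by itself; deciding what each group means (for example, occasional versus frequent shoppers) is left to a human, which is one reason interpretation can require additional analysis. Seeding the centroids with the first k points is a simplifying assumption here; practical implementations usually use smarter or randomized initialization.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

# Hypothetical unlabeled customers: (annual spend, visits per month), no target column
points: List[Point] = [
    (200.0, 1.0), (250.0, 2.0), (300.0, 2.0),
    (1500.0, 8.0), (1700.0, 9.0), (1800.0, 10.0),
]


def kmeans(data: List[Point], k: int, iterations: int = 10) -> List[List[Point]]:
    """Plain k-means clustering; initial centroids are the first k points."""
    centroids = list(data[:k])
    clusters: List[List[Point]] = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest centroid
        clusters = [[] for _ in range(k)]
        for p in data:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        for i, cluster in enumerate(clusters):
            if cluster:
                centroids[i] = (
                    sum(x for x, _ in cluster) / len(cluster),
                    sum(y for _, y in cluster) / len(cluster),
                )
    return clusters


for group in kmeans(points, k=2):
    print(group)
```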