Last updated on March 9, 2026

The Essential Guide to dbt Health Checks

By Jerry Hutchison

If you haven’t taken a serious look at the quality and performance of your dbt project in the last year, it’s time to take action. A dbt health check is more than a routine procedure. Even the most proficient and technically adept organizations can use one to uncover concrete opportunities for improvement and optimization. This article explains how a dbt health check keeps your data pipelines robust, efficient, aligned with best practices, and current with the latest dbt features, so that your data management capabilities continue to evolve and improve.

Thousands of companies rely on dbt for data management and transformation. However, as dbt projects grow in size and complexity, inefficiencies and suboptimal practices can emerge. These lead to collaboration difficulties, slower deployment of changes, more time-consuming debugging, and an increased risk of unreliable data, all of which can hinder effective data use and decision-making. A dbt health check offers a targeted solution to these issues, streamlining your data operations and improving decision-making across the organization. In this article, we discuss:

- What is a dbt Health Check?

- Risks of Not Doing Regular dbt Health Checks

- Our 6-Step Approach to a dbt Health Check

What is a dbt Health Check?

A dbt health check is a review designed to assess and improve the health of your dbt project and its surrounding analytics environment. It doesn’t have to be a major ordeal, but it should systematically examine code quality, complexity, documentation, testing, and development practices. The goal is to identify areas for improvement and to ensure that the dbt project stays well-maintained, efficient, and scalable for data transformation and analytics workflows.

Risks of Not Doing Regular dbt Health Checks

When a dbt project is first created, it is easy to maintain structure and clear development practices. However, as your data infrastructure expands to include more data sources and models, certain areas of your dbt implementation might need extra attention to maintain its initial performance standards. Some challenges that arise include:

- Failure to Use New Features: dbt is constantly evolving, introducing new features to boost efficiency and capabilities. Staying updated with these enhancements is crucial to harness their full potential for optimization and improved performance.

- Lack of Standard Practices: Adherence to a standard style guide, naming convention, or modeling conventions is key. Deviations from these standards often occur over time, and this can lead to inconsistencies in the codebase, making the project more challenging to navigate and maintain, potentially introducing errors, and affecting code quality.

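- Redundant Work: It’s important for teams to build on each other’s work instead of repeating tasks, for example by reusing an existing model rather than rebuilding the same joins and business logic in a new one. Avoiding redundancy saves time and resources, allowing the team to concentrate on more strategic and innovative tasks.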

- Onboarding and Documentation Challenges: As projects grow, onboarding new users and navigating documentation can become more complex. Ensuring clear and accessible documentation is vital for efficient team expansion and knowledge transfer.
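Documentation gaps are easier to discuss when you can measure them. The following minimal sketch assumes the `manifest.json` artifact that dbt writes to the `target/` directory whenever it parses the project (for example, after `dbt parse` or `dbt docs generate`). It reads only the `resource_type`, `name`, `description`, and `columns` fields of each node. The function names are illustrative and not part of dbt. Only columns declared in YAML appear in the manifest, so undeclared columns are not counted.

```python
import json
from pathlib import Path


def documentation_gaps(manifest: dict) -> dict:
    """Summarize missing model and column descriptions in a dbt manifest."""
    model_count = 0
    undocumented_models = []
    undocumented_columns = []
    for node in manifest.get("nodes", {}).values():
        if node.get("resource_type") != "model":
            continue  # tests, seeds, and snapshots also live under "nodes"
        model_count += 1
        if not (node.get("description") or "").strip():
            undocumented_models.append(node["name"])
        # Only columns declared in YAML appear here; undeclared columns are not counted.
        for col_name, col in node.get("columns", {}).items():
            if not (col.get("description") or "").strip():
                undocumented_columns.append(f"{node['name']}.{col_name}")
    documented = model_count - len(undocumented_models)
    return {
        "model_coverage": documented / model_count if model_count else 1.0,
        "undocumented_models": sorted(undocumented_models),
        "undocumented_columns": sorted(undocumented_columns),
    }


def load_manifest(path: str = "target/manifest.json") -> dict:
    """Read the manifest from dbt's default target directory."""
    return json.loads(Path(path).read_text())
```

Running `documentation_gaps(load_manifest())` from the project root gives a coverage ratio you can track from one health check to the next, along with a concrete list of models to document first.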

Our 6-Step Approach to a dbt Health Check

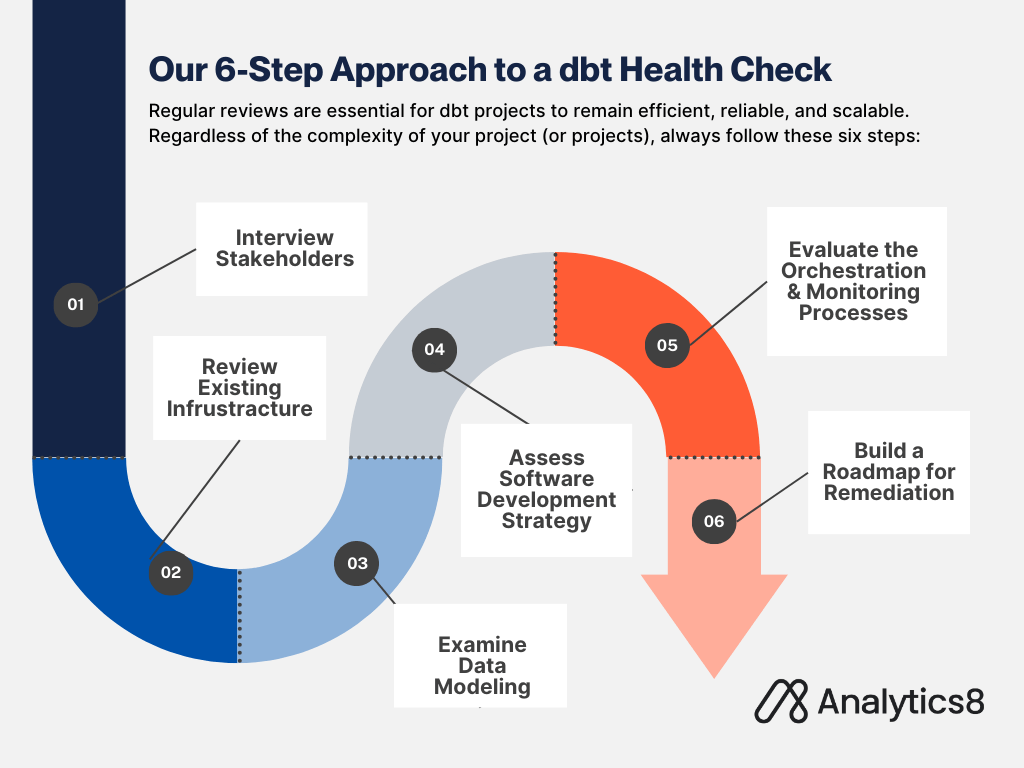

A dbt health check is not a one-size-fits-all process. A highly complex project (or projects!) with hundreds of models and dozens of contributors has different needs than a smaller, more recently started project. However, regardless of complexity, a dbt health check should encompass the following six steps.

Step 1: Conduct Stakeholder Interviews

Begin your dbt health check by interviewing stakeholders to gain a high-level understanding of the project’s impact and the areas that need improvement:

- Interview dbt developers to assess their daily experience and understand the health of the project and development environment from the user’s perspective. Ask questions such as:

  - Can you work creatively and efficiently?

  - Do you experience a productive ‘flow state’?

  - How often are you confused by the data project, and how often do you worry about introducing errors into the data pipeline?

- Talk to your data team to identify technical problems by digging into the places that cause the most pain. Ask questions such as:

  - Which pipelines break most often?

  - Which sources have the most errors?

  - Which dashboards are not reliable?

- Engage with the ultimate consumers of the data products, such as executives or business leaders. This provides a broader view of the project’s impact, helps guide future direction, and may offer perspectives that contrast with developers’ feedback. Ask questions such as:

  - What data do you rely on daily?

  - What essential data or information is missing?

  - How reliable and trustworthy do you find the data?

Step 2: Review Existing Infrastructure

Thoroughly evaluate the core infrastructure of your analytics stack, meaning your dbt and data warehouse environments and your orchestration and ingestion tools, whether you run dbt on dbt Platform (formerly dbt Cloud) or on self-managed cloud infrastructure.

- Data Warehouse Environments: Assess the setup and configuration. Focus on how access is managed, such as the use of role-based access for sensitive data and the assignment of administrator privileges. Examine environment sizing and whether the warehouse is configured for your specific usage patterns. If possible, check whether warehouse resources scale up and down efficiently to control costs.

- dbt Engine: Check whether the environment runs the latest version of the dbt engine (Core or Fusion), so that you can take advantage of new features and improvements, and confirm that the code is fully compatible with the other packages and tools in the data stack. For projects still on the Python-based Core engine, evaluate the costs and benefits of moving to the Rust-based Fusion engine and develop a migration plan if appropriate.

- Orchestration and Ingestion Tools: Investigate the reliability and efficiency of the data ingestion and orchestration processes. Determine the stability of ingestion pipelines and whether they are prone to frequent breakdowns. Evaluate the nature of the code used in these pipelines, distinguishing between custom-written ‘spaghetti code’ and commercial off-the-shelf solutions, and assess their impact on the stability and quality of data entering the data warehouse.
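A small helper can make the engine-version part of this review repeatable. The following sketch compares an installed version string with a target version that you supply, such as the newest release your team has validated. It does not look up the latest dbt release itself. The helper names are hypothetical. `installed_dbt_core()` reads only the pip-installed `dbt-core` package. Fusion is installed separately, so for Fusion you would pass in the version string that `dbt --version` reports.

```python
from __future__ import annotations

import re
from importlib import metadata


def parse_version(text: str) -> tuple[int, int, int]:
    """Extract (major, minor, patch) from strings such as '1.9.4' or '2.0.0-beta.3'."""
    match = re.match(r"\s*v?(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"Unrecognized version string: {text!r}")
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)


def version_gap(installed: str, target: str) -> str:
    """Classify how far the installed engine version trails a target version."""
    have, want = parse_version(installed), parse_version(target)
    if have >= want:
        return "current"
    if have[0] < want[0]:
        return "major version behind"
    if have[1] < want[1]:
        return "minor version behind"
    return "patch version behind"


def installed_dbt_core() -> str | None:
    """Return the pip-installed dbt-core version, or None if it is not installed.

    Fusion is not installed as the dbt-core package, so Fusion-only setups return None.
    """
    try:
        return metadata.version("dbt-core")
    except metadata.PackageNotFoundError:
        return None
```

For example, `version_gap("1.7.2", "1.9.0")` returns `"minor version behind"`. A gap of one major version is usually the point at which the cost-benefit and migration-plan discussion above becomes urgent.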

Step 3: Examine Data Modeling

Examine the dbt code and data modeling process to ensure efficiency and adherence to best practices:

- Data Staging: Assess how data is staged in the data warehouse. Ensure that raw data loaded from external systems is declared as dbt sources and that a staging layer handles the initial transformations. For large datasets, check whether incremental models are used so that each run processes only new or changed records.

- SQL Code Quality: Review the dbt SQL code for simplicity and readability. Confirm the use of CTEs, a consistent style guide, and naming conventions. These practices make the code easier to read, review, and collaborate on, and they make inefficient logic easier to spot.

- Code Reuse: Evaluate the use of macros for simplifying complex SQL logic and packages for encapsulating related dbt objects. This approach streamlines development, improves maintainability, and promotes team collaboration.
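Parts of the staging and naming review can be automated against the same `manifest.json` artifact. The following sketch assumes one common convention, which you should adjust to your own style guide. Staging models live in a `staging` folder and carry a `stg_` prefix. Staging models select only from sources, and models outside staging select from other models rather than from raw sources. The folder name, prefix, and function name are assumptions, not dbt defaults. The check relies on the `original_file_path` and `depends_on.nodes` fields of each model node, where sources appear as `source.*` unique IDs.

```python
from __future__ import annotations

from pathlib import PurePosixPath

STAGING_DIR = "staging"   # assumed folder name for the staging layer
STAGING_PREFIX = "stg_"   # assumed naming prefix for staging models


def audit_staging(manifest: dict) -> list[str]:
    """Return sorted findings about staging-layer conventions in a dbt manifest."""
    findings = []
    for node in manifest.get("nodes", {}).values():
        if node.get("resource_type") != "model":
            continue
        name = node["name"]
        # Normalize Windows separators before splitting the path into folders.
        path = node.get("original_file_path", "").replace("\\", "/")
        upstream = node.get("depends_on", {}).get("nodes", [])
        reads_sources = any(uid.startswith("source.") for uid in upstream)
        reads_models = any(uid.startswith("model.") for uid in upstream)
        if STAGING_DIR in PurePosixPath(path).parts:
            if not name.startswith(STAGING_PREFIX):
                findings.append(f"{name}: staging model without '{STAGING_PREFIX}' prefix")
            if reads_models:
                findings.append(f"{name}: staging model selects from other models")
        elif reads_sources:
            findings.append(f"{name}: reads a raw source directly, bypassing staging")
    return sorted(findings)
```

Each finding is a candidate for discussion, not an automatic violation. Some teams intentionally allow exceptions, such as a mart that reads a small reference source directly.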

Step 4: Assess Software Development Strategy

Assess how your team uses dbt in line with software development best practices:

- Version Control: Review your version control strategy. Ensure you’re leveraging features like Slim CI in dbt Platform, which builds and tests only the models changed in each pull request (plus their downstream dependents) and can block merges when those checks fail.

- Continuous Integration and Deployment (CI/CD): Confirm the presence of a consistent release model, such as blue-green deployment. Check for regular deployment schedules and consistent use of CI/CD practices to keep your development process predictable and coherent.
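It can help to understand what Slim CI is selecting when you review a CI setup. The following sketch shows the core idea under simplifying assumptions. It compares the file checksum of each model in a production manifest against a pull-request manifest, then walks the pull-request manifest’s `child_map` to collect everything downstream of the changed models, including tests. This approximates `state:modified+`. dbt’s real state comparison also accounts for config, macro, and contract changes, which this sketch ignores. The function names are illustrative.

```python
from __future__ import annotations

from collections import deque


def model_checksums(manifest: dict) -> dict[str, str]:
    """Map each model's unique_id to the checksum of its SQL file."""
    return {
        uid: node["checksum"]["checksum"]
        for uid, node in manifest.get("nodes", {}).items()
        if node.get("resource_type") == "model"
    }


def modified_plus(prod: dict, pr: dict) -> set[str]:
    """Select new or changed models plus everything downstream of them."""
    before, after = model_checksums(prod), model_checksums(pr)
    changed = {uid for uid, digest in after.items() if before.get(uid) != digest}
    selected = set(changed)
    queue = deque(changed)
    child_map = pr.get("child_map", {})
    # Breadth-first walk over the PR manifest's dependency graph.
    while queue:
        for child in child_map.get(queue.popleft(), []):
            if child not in selected:
                selected.add(child)
                queue.append(child)
    return selected
```

In practice you would not reimplement this logic. With dbt Core, the equivalent is `dbt build --select state:modified+ --defer --state <path-to-production-artifacts>`, and dbt Platform CI jobs configure the comparison against production for you. The sketch is mainly useful for checking whether your CI job builds the set of models you expect.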

Step 5: Evaluate the Orchestration and Monitoring Processes

Focus on how your data pipelines are orchestrated and monitored:

- Orchestration Process: Review how data pipelines are triggered and managed. If you’re using dbt Platform, check whether the built-in scheduler is configured to meet your needs and whether jobs are triggered through the dbt Platform API when upstream pipeline tasks complete. For those using additional orchestration tools, ensure these are fully integrated with dbt and provide a clear overview of the data pipeline’s lineage.

- Monitoring: Assess the monitoring processes in place. If you are using dbt Platform, use dbt Catalog (formerly dbt Explorer) to analyze when models start and finish and to highlight bottlenecks. If you use external tools, confirm that they provide robust monitoring, so that failures are caught safely and troubleshooting is efficient.
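Whichever monitoring tool you use, the raw timing data is also available locally. The following sketch assumes the `run_results.json` artifact that dbt writes to `target/` after commands such as `dbt run` or `dbt build`. Each entry in its `results` list carries a `unique_id`, a `status`, and an `execution_time` in seconds. The function names and the default `top_n` value are illustrative choices.

```python
from __future__ import annotations

import json
from pathlib import Path


def slowest_nodes(run_results: dict, top_n: int = 5) -> list[tuple[str, float]]:
    """Return the top_n (unique_id, seconds) pairs, slowest first."""
    timed = [
        (result["unique_id"], float(result.get("execution_time") or 0.0))
        for result in run_results.get("results", [])
    ]
    timed.sort(key=lambda item: item[1], reverse=True)
    return timed[:top_n]


def failures(run_results: dict) -> list[str]:
    """List nodes that errored (models) or failed (tests), in run order."""
    return [
        result["unique_id"]
        for result in run_results.get("results", [])
        if result.get("status") in {"error", "fail"}
    ]


def load_run_results(path: str = "target/run_results.json") -> dict:
    """Read run results from dbt's default target directory."""
    return json.loads(Path(path).read_text())
```

The longest-running models in this list are natural candidates for the bottleneck discussion, for example whether they should be incremental. Comparing the list across several runs shows whether a slowdown is persistent or a one-off.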

Step 6: Build a Roadmap for Remediation

After a comprehensive analysis of your dbt implementation, you’ll need a remediation roadmap. This roadmap should clearly outline the recommendations, implementation timeline, and how they will be put into place, providing stakeholders with a transparent view of the process. Here’s how to construct your roadmap:

- Categorize the recommendations: Identify which ones are essential for a healthy and robust dbt environment and which are beneficial but not as critical. This distinction helps prioritize tasks and manage resource allocation effectively.

- Define the scope and assign responsibility: For each recommendation, determine its impact and scope. Assign a team member to oversee its implementation, ensuring there’s accountability and systematic execution.

- Prioritize the changes: Start implementing the most impactful recommendations first and then work down the list. This approach ensures that the most critical issues are addressed promptly.

- Include training and continuous learning: If new features or capabilities are recommended, include training sessions for the team. This ensures everyone is up-to-date and can fully utilize dbt’s capabilities.

- Plan for future evaluations: Finally, schedule periodic reviews, preferably every 12 months, to ensure your dbt project continues to meet performance standards and scale effectively.
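The roadmap steps above can be kept honest with a very small data model. The following sketch is illustrative only. The `Recommendation` fields and the 1 to 5 impact scale are assumptions, not a standard. The code orders items essential first, then by higher impact, then by smaller effort. It refuses to schedule anything without an owner and lists the items that need accompanying training.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Recommendation:
    title: str
    essential: bool           # essential vs. beneficial but not critical
    impact: int               # assumed scale: 1 (low) to 5 (high)
    effort_weeks: float       # rough scope estimate
    owner: str | None = None  # person accountable for implementation
    needs_training: bool = False


def build_roadmap(recs: list[Recommendation]) -> list[Recommendation]:
    """Order recommendations: essential first, then higher impact, then smaller effort."""
    unowned = [r.title for r in recs if not r.owner]
    if unowned:
        raise ValueError(f"Assign an owner before scheduling: {unowned}")
    return sorted(recs, key=lambda r: (not r.essential, -r.impact, r.effort_weeks))


def training_plan(recs: list[Recommendation]) -> list[str]:
    """List recommendations that should come with team training sessions."""
    return [r.title for r in recs if r.needs_training]
```

A spreadsheet can serve the same purpose. The point of the sketch is that the ordering rule and the ownership requirement are written down explicitly, so the next periodic review can check progress against them.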


Case Study: Preparing for Success with a Proactive dbt Health Check for a National Insurance Company

Setting the Stage for Scalable Data Management

As a national insurance company geared up for rapid growth and faced increasing data complexity, its data leaders recognized the need to establish a solid foundation for their data management system. Before launching the system into production, they wanted to ensure that their data environment was ready to scale effectively and meet future requirements. Our approach centered on a comprehensive dbt health check to guide them through this critical phase. In the health check, we:

- Conducted a thorough review of their planned data ingestion, replication, and dbt project configurations.

- Evaluated their proposed CI/CD processes and code versioning strategies.

- Analyzed their planned setups for Snowflake, dbt, SSIS, and Fivetran.

- Examined their intended orchestration, monitoring, and documentation practices.

- Conducted interviews with developers to gain deeper insights and perspectives.


Key Takeaways

- A dbt health check is essential for evaluating the quality and performance of your dbt project, offering insights into improvement and optimization.

- As dbt projects expand, inefficiencies can emerge, impacting collaboration and decision-making, underscoring the importance of regular health checks.

- Neglecting regular dbt health checks can result in missed opportunities to utilize new features, lack of standard practices, and redundant work.

- Our 6-step approach to a dbt health check includes stakeholder interviews, infrastructure review, data modeling examination, assessment of development strategy, review of orchestration and monitoring, and building a remediation roadmap.

- Stakeholder interviews provide high-level insights into project impact and developer experience, while infrastructure reviews ensure robust and well-configured environments.

- Examining data modeling involves assessing data staging, SQL code quality, and code reuse to ensure efficiency and adherence to best practices.

- A remediation roadmap prioritizes recommendations, assigns responsibilities, and includes training to promote continuous improvement and adherence to best practices.