Last updated on May 6, 2026

Getting the Semantic Model & Layer Right in the Age of AI

By Sharon Rehana

AI agents are only as intelligent as the context behind your data, and for most organizations, that context is scattered, inconsistent, and invisible to the systems that need it most. Closing the AI readiness gap requires building a foundation that starts with defining what your data means, organizing it into a semantic model, and operationalizing it through a semantic layer.

I sat down with Christina Salmi, VP of Data Strategy at Analytics8, to understand what a good semantic foundation looks like and how to get it right.

1. What is the relationship between context, a semantic model, and a semantic layer — and why does getting that sequence right determine whether your AI is ready to generate measurable business value?

Think of context, a semantic model, and a semantic layer as a sequence — three distinct but connected layers, each one building on the last. Different data analytics tools in the market combine them in different ways, which is part of why the conversation gets complicated. But understanding what each one does and why the order matters is what separates organizations that get real value from AI from those still wondering why their pilots aren’t scaling.

Context

Context is the business knowledge that should accompany every data point: its definition, where it came from, how it’s calculated, what it means specifically at your organization, synonyms, and data quality ratings. Every company has nuances, and for a long time there was no good place to capture them. That knowledge lived in people’s heads, which always created a bottleneck where you had to find the one person who knew.
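To make this concrete, here is a minimal sketch of what one captured piece of context could look like as a record. The class name, field names, and example values are all hypothetical, chosen only to mirror the elements listed above (definition, source, calculation, synonyms, quality rating). It is not the format of any particular tool.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MetricContext:
    """Business context that travels with one metric (illustrative fields only)."""
    name: str
    definition: str                    # what the metric means at this organization
    source: str                        # where the data comes from
    calculation: str                   # how the number is produced
    synonyms: Tuple[str, ...] = ()     # other names people use for the same thing
    quality_rating: str = "unrated"    # e.g. "high", "medium", "low"


# Hypothetical example record; every value here is made up for illustration.
net_revenue = MetricContext(
    name="net_revenue",
    definition="Revenue after refunds and discounts.",
    source="orders table in the finance warehouse",
    calculation="SUM(gross_amount) - SUM(refunds) - SUM(discounts)",
    synonyms=("net sales", "revenue"),
    quality_rating="high",
)
```

Writing the record down, even in a form this simple, is what moves the knowledge out of one person's head and into something others can read and maintain.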

The Semantic Model

The semantic model is how you give that context structure, moving it from conversations and institutional knowledge into a formalized representation of how your business works. This is different from a data model. A data model reflects how data is physically organized and stored; a semantic model reflects how the business thinks. It layers on definitions, relationships, hierarchies, and organizational nuances that don’t fit into a physical table structure.

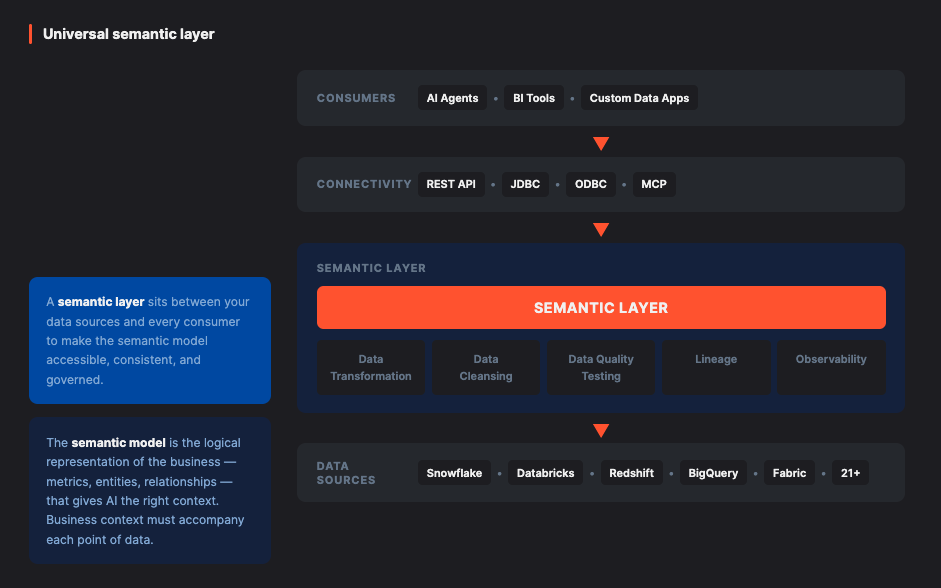
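As a rough illustration of what "definitions, relationships, and hierarchies" can look like once they are formalized, the sketch below holds all three in one small structure. The `SemanticModel` class, its method names, and the example entities are assumptions made for this example, not a standard or a vendor format.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class SemanticModel:
    """Toy semantic model: business definitions, hierarchies, and relationships."""
    definitions: Dict[str, str] = field(default_factory=dict)
    # Each hierarchy is an ordered list of levels, finest grain first.
    hierarchies: Dict[str, List[str]] = field(default_factory=dict)
    # Each relationship is a (subject, verb, object) triple.
    relationships: List[Tuple[str, str, str]] = field(default_factory=list)

    def roll_up_path(self, hierarchy: str, level: str) -> List[str]:
        """Return the given level and every coarser level above it."""
        levels = self.hierarchies[hierarchy]
        return levels[levels.index(level):]

    def related_to(self, entity: str) -> List[str]:
        """Return the entities that the given entity points to."""
        return [obj for subj, _verb, obj in self.relationships if subj == entity]


# Hypothetical content for illustration.
model = SemanticModel(
    definitions={"customer": "An account with at least one paid order."},
    hierarchies={"geography": ["store", "city", "region", "country"]},
    relationships=[("customer", "places", "order"), ("order", "contains", "product")],
)

print(model.roll_up_path("geography", "city"))
print(model.related_to("customer"))
```

None of this describes how rows are stored. It describes how the business talks about customers, orders, and geography, which is the distinction the section draws.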

The Semantic Layer

The semantic layer is where all that context becomes accessible. It is the consistent layer that ensures every system consuming your data, whether a BI tool, an AI agent, or a dashboard, gets the same context every time.
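One way to picture "the same context every time" is a single lookup that every consumer goes through, with each consumer only changing how the result is presented. The sketch below assumes a hypothetical in-memory store and made-up function names; real semantic layer products expose this through their own interfaces.

```python
from typing import Dict

# One shared definition store (hypothetical content for illustration).
METRICS: Dict[str, Dict[str, str]] = {
    "net_revenue": {
        "definition": "Revenue after refunds and discounts.",
        "expression": "SUM(gross_amount) - SUM(refunds) - SUM(discounts)",
        "table": "orders",
    }
}


def get_context(metric: str) -> Dict[str, str]:
    """Single entry point: every consumer reads the same record."""
    return METRICS[metric]


def for_bi_tool(metric: str) -> str:
    """Render the shared definition as a SQL query a dashboard could run."""
    ctx = get_context(metric)
    return f"SELECT {ctx['expression']} AS {metric} FROM {ctx['table']}"


def for_ai_agent(metric: str) -> str:
    """Render the same definition as plain text an agent can be given."""
    ctx = get_context(metric)
    return f"{metric}: {ctx['definition']} Calculated as {ctx['expression']}."


print(for_bi_tool("net_revenue"))
print(for_ai_agent("net_revenue"))
```

Because both renderers read from `get_context`, a change to the calculation is made once and reaches the dashboard and the agent together.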

The reason the sequence matters is that you can have the right technology for a semantic layer, but if you haven’t defined and organized your context into a sound semantic model, the layer has nothing meaningful to surface. AI makes this more urgent. A person can usually tell when something feels off and ask a follow-up question. AI moves at a pace and scale where bad context produces wrong answers everywhere, consistently, until someone catches it.

It’s also worth understanding this sequence from a practical standpoint. Different tools in your stack are built to serve different points in this lifecycle. Some are designed to capture context, others to maintain and govern it, and others to make it consumable by BI tools, dashboards, or AI agents. When teams treat context, semantic model, and semantic layer as one undifferentiated thing, it becomes hard to see where each tool fits, where capabilities overlap, or where the real gaps are. Separating them gives you a framework for matching the right tool to the right job across the lifecycle.

2. What are the signs that your AI readiness problem is really a semantic foundation problem?

Many of the signs are the same ones we’ve been seeing in analytics for years:

Most of the time this is not a data quality problem. It is a context problem. People are measuring slightly different things for different purposes, and without a shared definition, documented logic, or any way to surface the difference, it looks like a conflict.

Another clear signal is low self-service adoption. If your organization has the tools for self-service analytics but people still aren’t using them, that’s almost always a context problem. Users don’t feel confident because they don’t understand what they’re looking at or whether they’re pulling the right metric.

When AI enters the picture, these challenges surface faster and with far less room for error:

This is the biggest risk we face as AI becomes more central to business operations. A person can sense when something is off and ask a follow-up question, but AI operates at a pace and scale where bad context produces wrong answers everywhere, consistently, until someone catches it.

3. Most organizations have business context scattered everywhere — YAML files, wikis, spreadsheets, someone’s head. How do you start pulling that together into something AI can use?

1. Start with a use case that has clear purpose and value, something where you can demonstrate the benefit of having solid business context all the way through to how it surfaces for users or AI. That becomes your proof of concept and your template. The context you capture and structure for that first use case does not stay isolated; it becomes part of a growing foundation that other use cases can build on and that makes every future effort more consistent.

2. Use technology to capture and centralize context so multiple people can contribute to it and maintain it. Modern technologies, such as Coginiti, dbt Semantic Layer, ThoughtSpot Spotter Semantics, Atlan, and Power BI semantic models, have made this quicker and easier for everyone. Non-technical stakeholders can now contribute feedback directly inside the dashboards they already use. Business users can flag what does not look right. Data engineers can update calculations when something changes in the source system.

You want your context source to be a living, maintained body of knowledge rather than a one-time exercise.

3. Know that you do not need to build a full enterprise ontology before you start. Simple hierarchies are a completely valid starting point. The goal is to build toward a semantic layer where that context flows automatically and consistently to any tool or AI agent that needs it. Ontologies are a means, not the goal. What matters is choosing an approach to organizing context that fits your organization today and can scale as your needs evolve.

4. Building a semantic layer that works across an entire business requires alignment between people who rarely agree — data engineers, BI teams, and business stakeholders. How do you get everyone working from the same definition of truth?

The first step is understanding what everyone is trying to accomplish, because what looks like a conflict over the same metric is often just two teams measuring different things for different purposes. That distinction matters because the solution is different in each case. Sometimes it is the same metric with two different names, and the fix is simply adding context that maps them together. Other times the definitions are genuinely different, and the right answer is to document both clearly and stop treating the difference as a problem.
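The two cases above call for different treatment, and a small sketch can show the difference. Here, names that mean the same thing are mapped to one canonical metric, while genuinely different definitions are stored side by side and labeled by team. The dictionaries, the `resolve` function, and all example definitions are hypothetical.

```python
from typing import Dict, Optional

# Case 1: same metric, different names. Map each alias to one canonical name.
ALIASES: Dict[str, str] = {"net sales": "net_revenue", "net rev": "net_revenue"}

DEFINITIONS: Dict[str, str] = {"net_revenue": "Revenue after refunds and discounts."}

# Case 2: genuinely different definitions. Document each one instead of forcing a merge.
VARIANTS: Dict[str, Dict[str, str]] = {
    "active_customers": {
        "default": "Customers with a paid order in the last 90 days.",
        "marketing": "Customers who opened an email or placed an order in the last 30 days.",
    }
}


def resolve(term: str, team: Optional[str] = None) -> str:
    """Return the definition for a term, honoring aliases and team variants."""
    canonical = ALIASES.get(term.lower(), term.lower())
    if canonical in VARIANTS:
        variants = VARIANTS[canonical]
        # Fall back to the default when a team has no variant of its own.
        return variants.get(team or "default", variants["default"])
    return DEFINITIONS[canonical]


print(resolve("Net Sales"))
print(resolve("active_customers", team="marketing"))
```

In the first case the conflict disappears once the alias is recorded. In the second, both definitions stay, and the layer makes it explicit which one a given consumer is reading.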

An effective way to resolve a conflict is to bring everyone together and walk through the actual logic behind the metric. When people see all the layers of calculation that go into producing a number, a lot of the tension resolves on its own.

They realize the other team’s version is not wrong. It is just answering a different question.

Most organizations do need a company-wide standard for their most critical metrics, and that requires executive sponsorship. Someone needs the authority to say this is the default definition, and here is where the variations live. That top-down reinforcement is important, but it lands much better when shared understanding has already been built from the ground up.

A tip for consistently enforcing your hard-earned definitions: tools like ThoughtSpot, Sigma, Looker, Tableau, and Power BI now allow teams to surface context and definitions directly inside the dashboards people are already using, so documentation does not live in a separate portal that nobody visits.

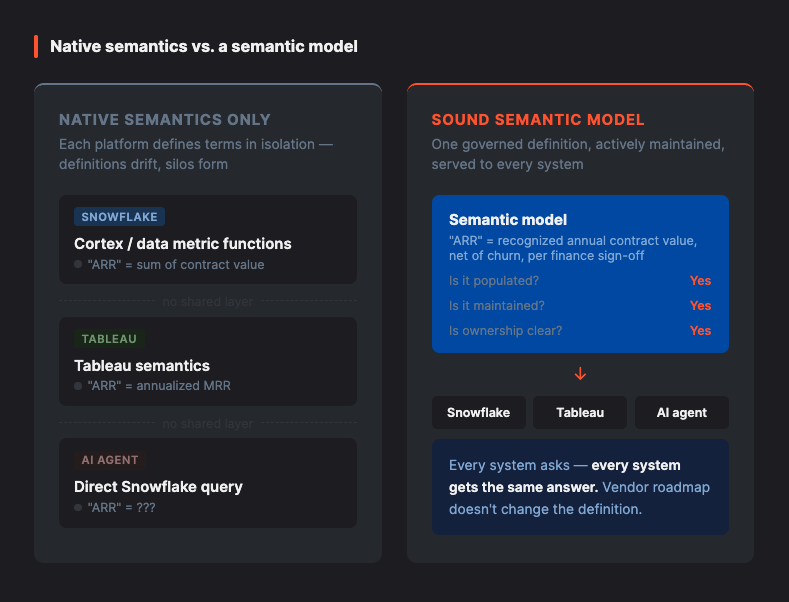

5. Many tech platforms offer their own native semantics. Why do you still need to build a sound semantic model?

Having a platform with native semantics and using it are two different things. The first question to ask is not whether your platform has a semantic layer. It is whether you are populating it, whether you have a process for maintaining it, and how you are deciding what goes in there. The feature being available does not mean you are getting the value.

Most enterprise organizations are also not running on a single platform. If your semantic layer is tied to one tool, it is only serving the users and systems within that tool’s reach, and you are dependent on that vendor’s roadmap for whatever comes next.

This is where a universal semantic layer becomes the more practical answer. Tools like Coginiti are built specifically to sit across your existing infrastructure, whether that’s a single platform or a combination of several: Snowflake, Databricks, Google BigQuery, on-prem systems, and more. You define your business logic and context once, and it flows consistently to every platform, analytics tool (like Power BI and Tableau), AI agent, and downstream consumer.

That is the difference between a semantic layer that serves one tool and one that serves the entire organization: you are not replacing what you’ve already built; you’re making all of it work together.

6. For a business starting from scratch on creating a semantic foundation, what does a realistic timeline look like — and what determines how fast they can move?

The most important mindset shift is to stop thinking about a semantic foundation as a project with a single finish line. It is a culture change as much as a technology change. It involves multiple people, it integrates into how teams work, and it takes time to stick.

The approach that works is agile, not waterfall. Break it into manageable pieces, each with end-to-end value you can demonstrate. That keeps the work grounded in real business outcomes and stakeholders engaged. You want to ship something useful, learn from it, and build on it.

That does not mean skipping the strategy. Knowing where you want to end up is what allows you to make smart decisions on each smaller piece. Every project you do, you do with that bigger picture in mind, building the structure and consistency that will serve you as you scale.

7. How does an organization benefit when AI finally has the context it needs?

The organizations seeing the most value from AI share a common foundation: solid context, a well-defined semantic model, and a semantic layer that makes it all accessible. When that is in place, adoption follows naturally. People across the business trust the data because they understand it, and that trust extends to AI. They are not afraid of it. They know how it is being used, guardrails are in place, and when AI surfaces a recommendation, the context comes with it. Not just the answer, but how it got there.

8. This isn’t work most organizations can do alone. What does the right outside partner bring to this process that an internal team can’t?

The right partner brings more than technical expertise. They bridge the gap between business and technology, bring patterns from working across hundreds of organizations, and know how to surface the tribal knowledge that no tool can find on its own.

That is the work Analytics8 has been doing for more than 20 years, exclusively in data and AI, across healthcare, financial services, CPG, retail, and beyond. We bring the strategic and technical depth to meet organizations wherever they are in the journey, and we use our AI-powered delivery framework, Accelr8, to dramatically accelerate the discovery and context-gathering work that used to take months. We are not generalists. Data is our entire practice, which means every engagement draws on a depth of experience that is hard to find in a single partner.

Most importantly, we build capability into your team so that the work compounds long after the engagement ends.